A new system lets robots read human brain signals to detect mistakes early and react in real time, reducing delay and improving control in critical tasks.

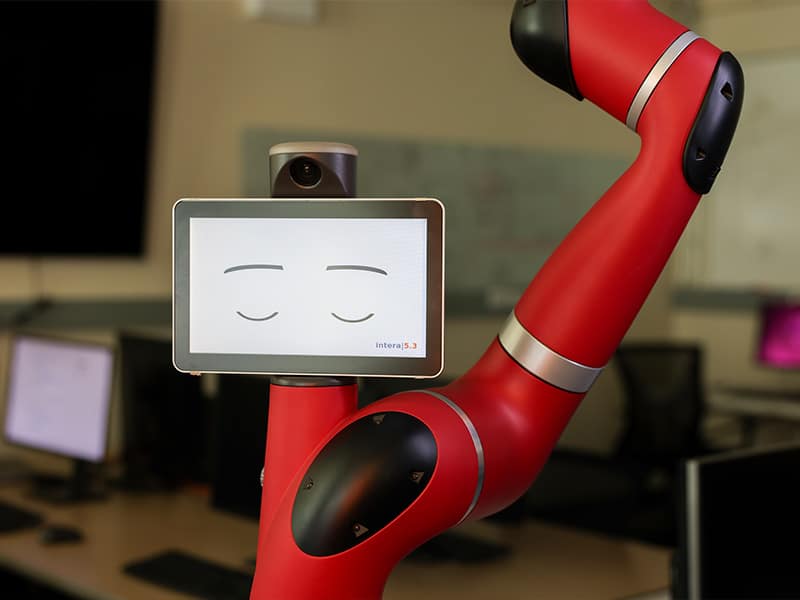

Robots usually react after a mistake happens. A team at Oklahoma State University is working on a system that lets robots respond the moment a human senses something is wrong. The system reads brain signals and changes robot actions in real time. If a person detects a problem, the robot can slow down, stop, or give back control within milliseconds. This shifts robot response from delayed correction to early intervention.

The system uses a brain-computer interface (BCI) to detect error-related potentials, or ErrPs. These signals appear within a fraction of a second after a person recognizes a mistake, before any physical action. A wearable electroencephalography (EEG) cap captures the signals and sends them to a shared-control robot.
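The detection step can be illustrated with a toy example. The sketch below is not the team's detector; it assumes a single EEG channel, a known event time, and a simple amplitude threshold. The function names, the 0.2 to 0.4 second scoring window, and the threshold are all hypothetical choices for illustration. Practical systems typically run trained classifiers on multichannel data.

```python
from statistics import mean

def extract_epoch(signal, event_idx, fs, t_start=-0.2, t_end=0.6):
    """Cut a window around an event (times in seconds relative to the event)."""
    lo = event_idx + int(round(t_start * fs))
    hi = event_idx + int(round(t_end * fs))
    if lo < 0 or hi > len(signal):
        raise ValueError("epoch falls outside the recording")
    return signal[lo:hi]

def baseline_correct(epoch, fs, baseline_s=0.2):
    """Subtract the mean of the pre-event samples from the whole epoch."""
    n = int(round(baseline_s * fs))
    offset = mean(epoch[:n])
    return [x - offset for x in epoch]

def errp_score(epoch, fs, baseline_s=0.2, win=(0.2, 0.4)):
    """Mean amplitude in an assumed post-event window (seconds after the event)."""
    start = int(round((baseline_s + win[0]) * fs))
    stop = int(round((baseline_s + win[1]) * fs))
    return mean(epoch[start:stop])

def detect_errp(signal, event_idx, fs, threshold):
    """Flag an error when the baseline-corrected score exceeds the threshold."""
    epoch = baseline_correct(extract_epoch(signal, event_idx, fs), fs)
    return errp_score(epoch, fs) > threshold
```

In this sketch, `fs` is the sampling rate in hertz and `signal` is a plain list of voltage samples, so a deflection that follows the event raises the score while a flat recording does not.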

This approach addresses a key gap in teleoperation. In high-risk work like nuclear site handling or deep-sea inspection, robots cannot operate fully on their own. Human control helps, but operators take time to react, so fast-developing failures are hard to stop. Most robots detect issues only after contact, and by then the response may be too late. Brain signals act as an early warning.

The signals come from the brain’s anterior cingulate cortex, which produces ErrPs as an internal alert. Because the brain registers an error before the body can physically respond, this gives a short but critical time window for correction.

To make the system usable, the team built a model that learns general brain patterns and then adapts to each user. Because EEG signals vary from person to person, brain-computer interfaces usually need per-user setup, and long setup time is a common problem. Fast adaptation shortens it.
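The article does not describe the model itself. As a rough illustration of the general-then-adapt idea, the sketch below swaps in a deliberately simple nearest-centroid classifier on a single feature: class averages are learned from pooled data, then blended with a handful of calibration samples from a new user. The names and the `prior_weight` parameter are hypothetical.

```python
from statistics import mean

def class_means(samples, labels):
    """Average feature value per class: 0 = correct action, 1 = error."""
    return {
        c: mean([x for x, y in zip(samples, labels) if y == c])
        for c in (0, 1)
    }

def adapt(general, user_samples, user_labels, prior_weight=1.0):
    """Blend general class means with a user's calibration means.

    prior_weight acts like the number of pseudo-samples backing the
    general model; larger values trust the pooled data more.
    """
    adapted = {}
    for c in (0, 1):
        xs = [x for x, y in zip(user_samples, user_labels) if y == c]
        if not xs:
            adapted[c] = general[c]  # no user data for this class
            continue
        n = len(xs)
        adapted[c] = (prior_weight * general[c] + n * mean(xs)) / (prior_weight + n)
    return adapted

def predict(means, x):
    """Return 1 (error) if x is closer to the error-class mean, else 0."""
    return 1 if abs(x - means[1]) < abs(x - means[0]) else 0
```

The design choice here is that a few user samples shift the decision boundary without discarding what the pooled data already captured, which is why only a short calibration session is needed in this toy setup.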

Safety is enforced with Signal Temporal Logic (STL), a formal language for specifying time-bounded constraints on how the robot can act. The brain signal flags a problem, and the logic defines the allowed response. This keeps control stable even with direct brain input.
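The team's actual specifications are not given in the article. As one hypothetical example, the rule "whenever an error is flagged, the robot's speed must drop to a safe level within a fixed number of control steps" can be written in STL as G(flag → F[0,h](speed ≤ v_safe)). The sketch below scores a recorded trace against that rule using the standard quantitative (robustness) semantics, where a positive value means the rule held with margin and a negative value means it was violated. The variable names, units, and the choice to truncate the window at the end of the trace are assumptions.

```python
import math

def eventually_slow(speeds, t, horizon, v_safe):
    """Robustness of F[0,horizon](speed <= v_safe) at step t.

    This is the best safety margin reached anywhere in the window,
    which is cut short if the trace ends first.
    """
    window = speeds[t : t + horizon + 1]
    return max(v_safe - s for s in window)

def robustness(flags, speeds, horizon, v_safe):
    """Robustness of G(flag -> F[0,horizon](speed <= v_safe)) over a trace.

    Steps without a flag satisfy the implication vacuously (+inf), so
    the result is the worst margin over the flagged steps.
    """
    if len(flags) != len(speeds):
        raise ValueError("flags and speeds must have the same length")
    rho = math.inf
    for t, flagged in enumerate(flags):
        if flagged:
            rho = min(rho, eventually_slow(speeds, t, horizon, v_safe))
    return rho
```

A monitor like this only checks a trace after the fact. Using such a rule to constrain the controller ahead of time is a separate synthesis problem that this sketch does not attempt.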

The system is being tested using NVIDIA Isaac Lab and NVIDIA Isaac ROS on RTX PRO 6000 GPUs for real-time simulation and control.

The same idea can extend beyond industrial use. In healthcare, it could support prosthetics and exoskeletons. For example, a prosthetic limb could detect when a user senses a wrong movement and correct it immediately.