When researchers asked more than 1,000 Americans to assign colors to robots according to the robot’s job, they found that biases familiar from the human workplace resurfaced—and that the people making the choices rarely recognized them as biases. The patterns were strong enough to predict which robot would be picked for which role, yet participants explained themselves in the neutral language of practicality, not prejudice. As humanoid machines move from research labs onto factory floors and into hospitals, that gap between what people choose and what people think they’re choosing is precisely what worries the researchers: a workforce of robots could end up sorted by the same hierarchies that sort the human one, with no one willing to call it that.

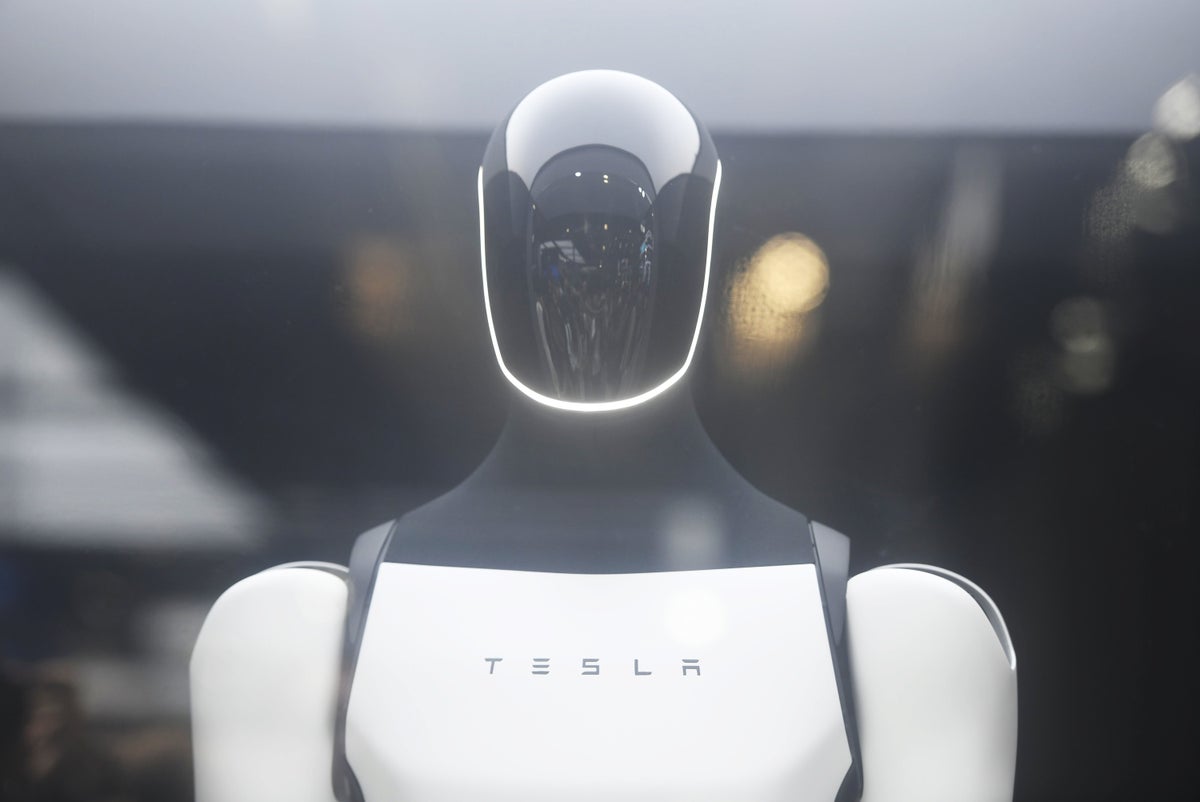

The study, published in conference proceedings in March 2026 by researchers Jiangen He, Wanqi Zhang and Jessica K. Barfield, joins a growing body of robotics research that often disagrees about whether people perceive robots as having a race at all. It also arrives at a time when questions about humanoid robot design are about to stop being academic. Tesla CEO Elon Musk says he will convert part of a factory in Fremont, Calif., to produce the company’s Optimus robots. Chinese firms such as Unitree Robotics are shipping backflipping robots to consumers, and Figure AI’s humanoids are working on BMW assembly lines. “Assigning appearance to a social robot is never a purely aesthetic choice,” He, Zhang and Barfield write in a paper posted to the preprint server arXiv.org that expands on the study. “It is a profound socio-technical intervention requiring intentional ethical design.”

For the study, the researchers recruited participants through the survey platform Prolific and showed each of them four workplace scenes without any human figures: a construction site, a hospital, a home tutoring setup and a sports field. For every scene, participants picked one robot from a lineup of six that differed only in color: four skin tones ranging from light to dark, plus a silver and a teal option meant as nonracial baselines. Roughly half chose silver or teal across the scenarios. But when participants selected a skin-toned robot, the results tracked with stereotypical associations that researchers have documented between Latinos and manual labor, Asians and academic competence, Black people and athletic ability, and white people and professional roles. In a second experiment with a different group of participants, the researchers added human professionals (a Latino construction worker, a white doctor, an Asian tutor and a Black athlete) to the same scenes. The bias sharpened: those participants were nearly six times more likely than the first group to pick a robot whose skin tone matched the worker they had just seen.

The team also asked participants to explain their robot color choices. “We wanted to dig in deeper to the reasons why certain robots were chosen for certain positions,” says Barfield, a researcher at the University of Kentucky. Many participants justified white robots for health care settings because they looked cleaner and dark robots for construction because they were less likely to show dirt, the researchers report in their arXiv.org preprint. A different pattern emerged when the researchers zoomed in on the moments in which participants happened to pick a robot whose skin tone matched their own for a job. White and Asian participants tended to reach for psychological and affective reasoning, saying that the robots made them feel calm or that they personally liked the color. By contrast, Black participants who selected dark-skinned robots gave functional justifications. “They would say, ‘Oh, this robot looks stronger or looks more useful’—this kind of more functional reason,” says He, a researcher at the University of Tennessee, Knoxville.

In what is called racial mirroring, people tend to feel affective resonance with agents that look like them, the researchers explain in the preprint. The contrast in how participants justified choosing robots that matched their own skin tone suggests that mirroring is not a universal experience. For Black participants, the researchers argue, choosing a dark-skinned entity in a society with systemic anti-Black biases is structurally different from choosing a light-skinned one. Rather than affective language, Black participants tended to reach for the language of competence or functional justification. “The lack of affective mirroring from Black participants may reflect historical realities where darker skin has been systematically stripped of ‘warmth’ in cultural narratives, forcing a heavier reliance on ‘competence,’” they write.

Across both studies and every job scenario, the silver and teal robots were the most popular picks—chosen more often, on average, than any individual skin tone. As robots became more humanlike, participants’ explanations increasingly framed those neutral colors as industrial or practical. “When the robot is getting more humanlike, people try to avoid making this kind of sensitive choice,” He says.

These are not the first studies to suggest that people show racial bias toward robots. In a 2018 study, Christoph Bartneck, a human-computer interaction researcher at the University of Canterbury in New Zealand, and his colleagues adapted a well-known psychological tool called the shooter bias paradigm. In the classic version, participants play a kind of video game in which human figures, some Black and some white, are holding either guns or harmless objects. Players have a split second to decide: shoot or don’t shoot.

In Bartneck’s study, participants were quicker to shoot armed Black targets than armed white ones and quicker to withhold fire from unarmed white targets compared with unarmed Black ones. Bartneck and his colleagues then swapped in robots “to see whether this works for robots as well,” he says. Participants in the study responded to dark-skinned robots the same way they did to Black humans in the video game scenario.

In a follow-up study, the researchers introduced a brown-skinned robot alongside the dark- and light-skinned ones, and the bias disappeared. The world, it turned out, was harder to sort when it wasn’t binary.

Robert Sparrow, a philosopher at Monash University in Australia who is among the first to have written about the ethics of robotics, argues that robots carry two competing racial narratives. The first is symbolic. The word “robot” comes from Czech writer Karel Čapek’s play R.U.R. (Rossum’s Universal Robots), published in 1920 and first performed in January 1921, in which the robots were organic beings created as forced laborers—the Czech word robota means forced labor. “They were very clearly a stand-in for workers,” Sparrow says. The fears of robot uprising, from his perspective, are essentially the fears of slave revolt, of the working class seizing the means of production. Čapek’s robots weren’t depicted with dark skin, Sparrow says, but they occupied the cultural position of an enslaved underclass—coded, in his reading, as the racialized labor of the era. “So robots, right from the start, represent the sort of oppressed underclass of racialized workers,” he says.

The second narrative, the one that came later—with science fiction reinventing the robot as gleaming, futuristic, aspirational—built a future that, as imagined by European and American science fiction writers, was white. “The sort of classic Asimov-period science fiction—people just imagined that the ‘highest races’ are going to colonize the stars,” Sparrow says. Engineers who grew up on those stories then built the machines they had seen on-screen. “It’s got to look like it comes from the future,” Sparrow says. “What does the future look like? It’s what science fiction tells us.” And science fiction, for most of the 20th century, told us the future looked like white people in sleek environments. The Anthropomorphic Robot Database—a photographic catalog of humanoid robots from labs around the world—is, in Sparrow’s description, “a wall of white.”

Yet Sparrow is honest about the limits of the evidence. “The scientific literature doesn’t speak with one voice on this topic,” he says. Some researchers have looked for racialized responses to robots and found nothing. In 2022 researchers Jaime Banks and Kevin Koban published a study in which dark or light skin tones and stereotypically male or female traits had what they called “scant influence” on stereotyping a humanoid robot. Participants in the study seemed to be stereotyping robots as robots—slotting them into a category of “nonhuman agent.”

Lionel Obadia, a cultural anthropologist at the University of Lyon 2 in France who studies human-robot interaction across Europe and Asia, is skeptical that people racially stereotype humanoid robots. In his ethnographic fieldwork, which involves observing real humans interacting with real robots in natural environments rather than running online experiments with images, race has not surfaced as a significant factor. “Racism is much more a human problem rather than a robotic one,” Obadia says, cautioning that “from the lab to real life, from images to embodied robots, from online questionnaires to empirical observation,” the findings may not survive the journey.

But Obadia’s deeper objection is about universalism. He argues that the discussion is overdetermined by American frameworks: the studies by He, Zhang and Barfield were conducted with U.S. participants in a specifically U.S. racial context, and Obadia does not think their findings can be generalized cleanly to robots or human-robot interaction elsewhere in the world.

Tesla’s humanoid Optimus robot shows how scholars can disagree. Optimus is mostly white but has a black head and significant black paneling. In 2021, when Tesla unveiled its concept for what would become Optimus, some critics saw problematic racial coding. Digital ethics expert Davi Ottenheimer argued that the presentation evoked both blackface and the fantasy of a controllable Black servant. Edward Jones-Imhotep, a historian of science and technology, also told WIRED that he sees a link between the humanoid and that racist phenomenon.

Sparrow says he reads the Optimus design as white. “But I suspect there’s actually been a conscious design choice there not to make it all white, in order for it to be defensible,” he adds.

Obadia sees the Optimus question as evidence of how the racial framing itself distorts perception. “I suspect this is linked to the overemphasis on robots’ color to race and finally racism: it can lead to a surprising distortion of the perception of color of robots and see them all [as] white.”

Bartneck also warns against pushing the argument too far. “What we have to be careful about is sensationalism,” he says. “Not everything is about race. Nobody cares about the color of your washing machine.”