Imagine learning to operate a piece of machinery you’ve never touched, not by following a tutorial, but by having your own hands electrically guided through the right motions. That’s the core idea behind an AI-powered suit created by researchers at the University of Chicago.

The system was developed by PhD students Yun Ho and Romain Nith under researcher Pedro Lopes at the University of Chicago’s Human Computer Integration Lab (HCintegration). It combines a wearable electrode suit, smart glasses with a built-in camera, a motion-tracking layer, and a multimodal AI model capable of processing both vision and language, the same class of technology as GPT-4.1. The suit physically moves a user’s muscles in real time, adapting to whatever task is in front of them, with no pre-programmed routine required.

“This could be a game-changer, not only for tasks that are highly physical (such as learning physical skills required for working with manufacturing and materials or learning musical instruments) but also in situations where users might be situationally impaired,” said Lopes.

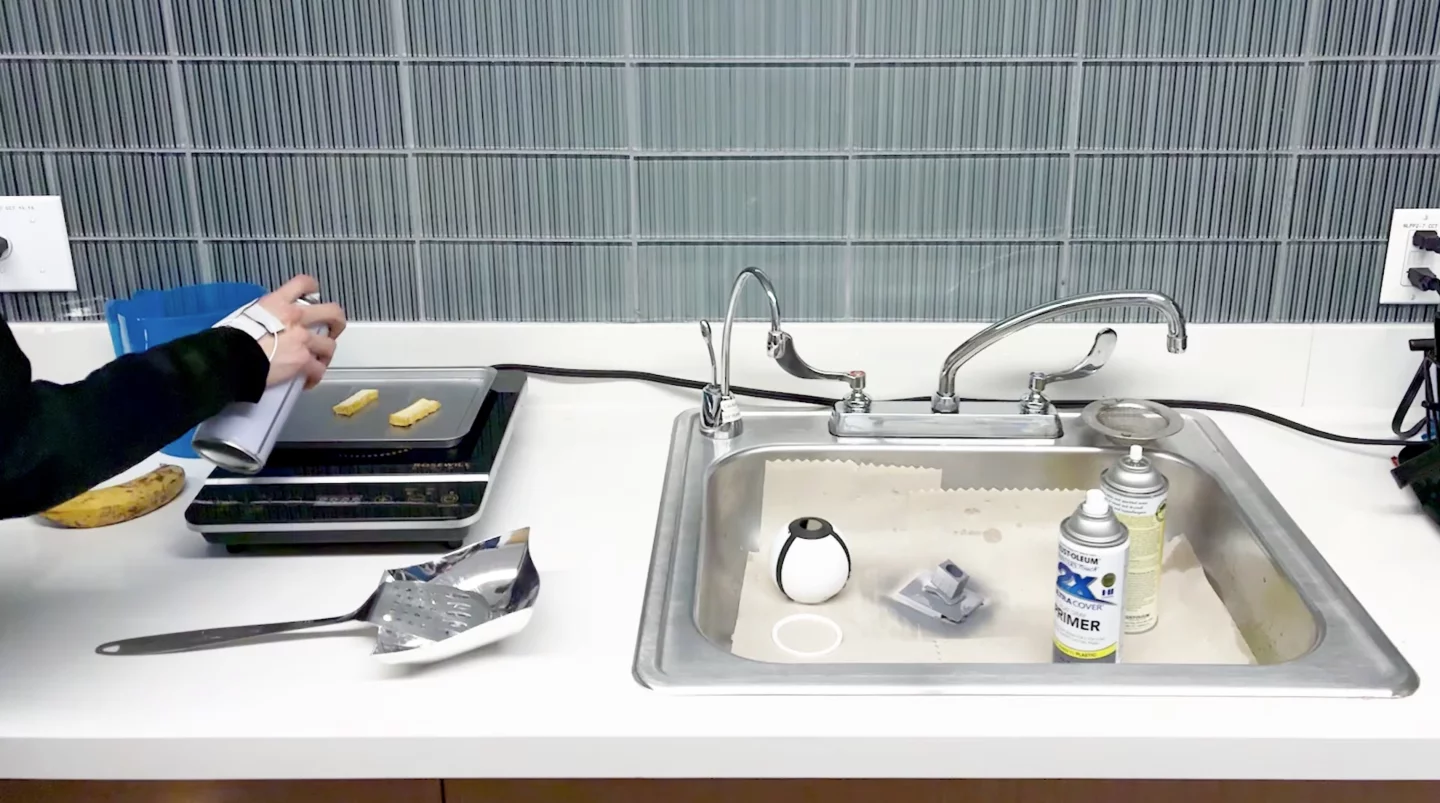

YouTube/HCintegration – University of Chicago

The technology is built on electrical muscle stimulation (EMS), a technique that sends low-level electrical pulses to specific muscles to trigger movement. EMS has been used for years in physical rehabilitation, piano instruction, and sign language training. But earlier systems were essentially fixed scripts. Program one to shake a spray can, and it would do so reliably. Show it a cooking oil spray that doesn’t need shaking, and it would shake it anyway. Context was invisible to them.

The new system can reason about context. Smart glasses capture what’s in front of the user, the motion-tracking suit reads their posture in real time, and the AI processes all of that to generate movement instructions tailored to the specific moment. It decides which joint to move, in which direction, and in what order.

A fourth layer, an anatomical safety filter, sits between the AI and the body. If the model instructs a wrist to rotate 180 degrees – physically impossible without injury – the system automatically redistributes that motion across multiple joints. In lab tests, the suit made significantly fewer errors than a basic AI model without this body-awareness layer.
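To make the idea of redistributing motion concrete, here is a minimal Python sketch. It is not the researchers’ implementation: the `Joint` class, the `redistribute` function, the joint names, and the degree limits are all hypothetical and chosen only for illustration. It assumes a simple rule in which each joint takes as much of the requested rotation as its limit allows and passes the remainder to the next joint in the chain.

```python
from dataclasses import dataclass


@dataclass
class Joint:
    name: str
    max_rotation_deg: float  # hypothetical safe limit, not real anatomical data


def redistribute(requested_deg, joints):
    """Split a requested rotation across joints in order, capping each at its limit.

    Returns the per-joint plan and any rotation that could not be assigned.
    """
    remaining = abs(requested_deg)
    sign = 1.0 if requested_deg >= 0 else -1.0
    plan = {}
    for joint in joints:
        # Each joint absorbs as much as it safely can; the rest moves down the chain.
        share = min(remaining, joint.max_rotation_deg)
        plan[joint.name] = sign * share
        remaining -= share
    return plan, sign * remaining


# Illustrative limits only (assumptions for this sketch).
ARM_CHAIN = [
    Joint("wrist", 80.0),
    Joint("forearm", 70.0),
    Joint("shoulder", 60.0),
]

plan, unmet = redistribute(180.0, ARM_CHAIN)
print(plan)   # per-joint rotation in degrees
print(unmet)  # rotation the chain could not absorb
```

In this toy version, a 180-degree request that no single joint could satisfy is spread over three joints, each staying within its own limit. A real filter would presumably also have to account for the user’s current posture and how joints interact, which this sketch ignores.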

In practice, using the suit is simpler than it sounds. A user approaches an unfamiliar window, says “EMS, help me open this,” and the system identifies the handle type and electrically guides their fingers, wrist, and elbow through the correct sequence.

Generative Muscle Stimulation: Physical-AI by Constraining Multimodal-AI with Embodied Knowledge

The team outlines three near-term use cases. In rehabilitation and physiotherapy, the suit could guide patients through safe movements at home without constant supervision. In industrial settings, it could cut training time and reduce injury risk when workers encounter unfamiliar machinery. For blind people or those with low vision, it could offer direct physical orientation, not an audio description but an actual guided movement through an unfamiliar environment.

In user tests, when the system deliberately made errors, participants caught them, corrected them with verbal instructions, and completed the task anyway. One participant noted that the body’s own intuition made the mistakes immediately obvious, a promising indicator that humans can stay genuinely in the loop.

Impressive as the technology is, the researchers acknowledge that there is still plenty of room for improvement. Electrode calibration needs to be personalized to each body. The tingling sensation from EMS can be uncomfortable, and the system doesn’t yet build true muscle memory – the kind of deep, practiced skill that only comes from repetition.

The technology also has a worrying dystopian side. A suit that can move your body is, in theory, a suit that could be hijacked. Making the system hackproof is a prerequisite for any serious real-world deployment. And, so far, no commercial timeline has been announced.

“While we are really excited about our system, it is clearly just the first step; much more needs to happen,” said Lopes. “Currently this is not something you can just wear in your everyday life but more of a superhero suit that researchers are experimenting with in the lab.”

The project just took home the Best Paper Award at ACM CHI 2026, the world’s largest human-computer interaction conference, which opens today in Barcelona. You can read the paper in full on the ACM Digital Library.

Source: University of Chicago