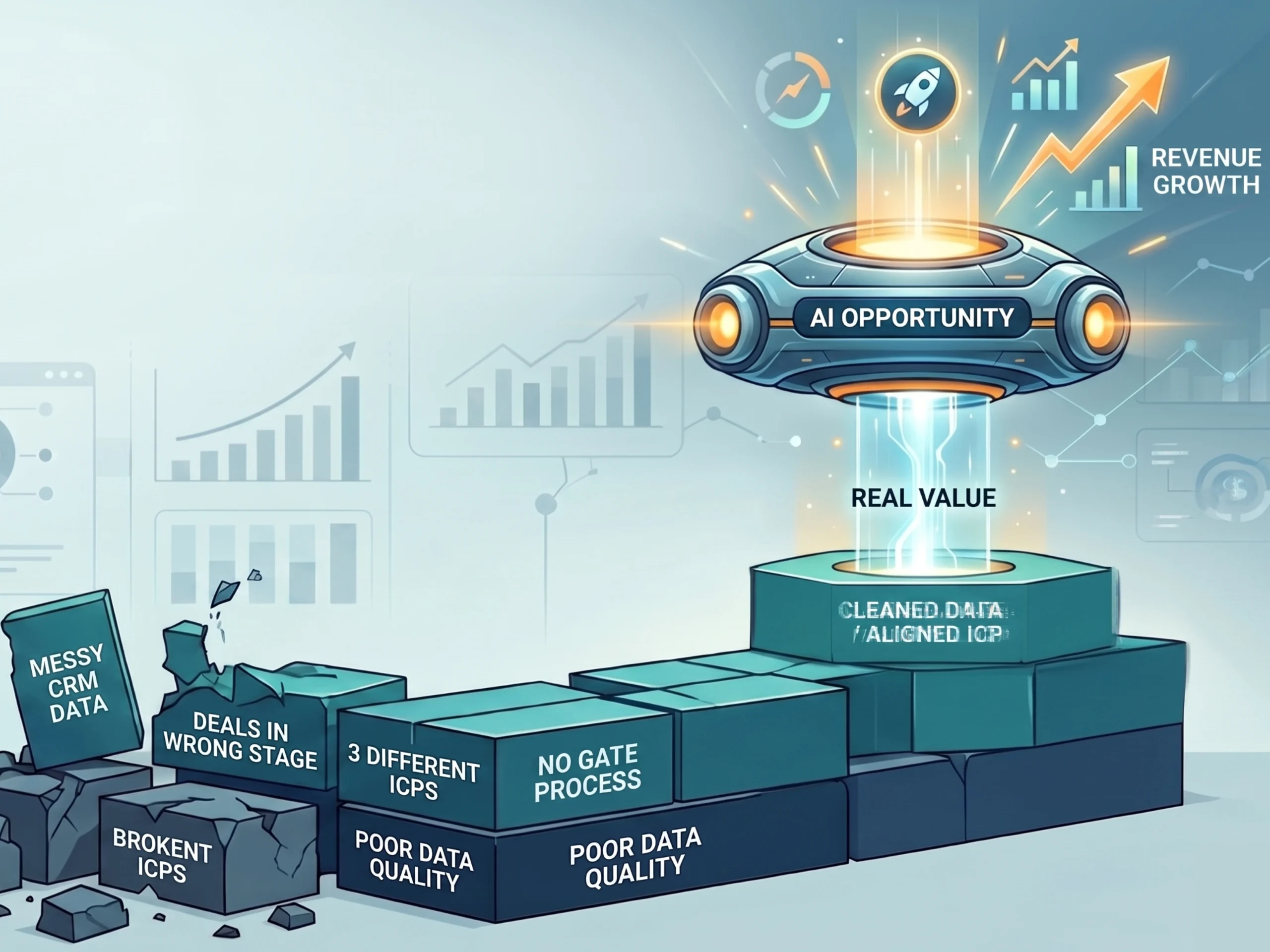

Every go-to-market team wants AI. Very few have the data to back it up.

That’s not cynicism.

We surveyed value creation teams across the industry and the findings were telling: nearly three-quarters said generative AI would have the greatest impact on value creation over the next three years, yet 35.8% said it’s currently the most underserved area in their organizations.

The biggest opportunity and the biggest gap, in the same breath.

These teams aren’t lacking ambition. They’re running on fumes and trying to bolt AI onto infrastructure that was never built for it.

Amy Kramer, Operating Partner for Go-to-Market at Level Equity, said it plainly in our State of the Industry discussion on value creation:

“A lot of companies are so excited to leverage AI and think about what they can use, and I say… we don’t even have basic processes and data. We’re not there yet.”

Before you swipe the credit card on that shiny new AI platform, read this.

The Foundation Problem Nobody Wants to Talk About

Your AI stack is only as smart as the data feeding it. And for most growth-stage B2B companies, that data is a mess.

Deals sitting in the wrong pipeline stages. No gate processes enforcing progression. “Closed lost” that hasn’t been touched in six months. These aren’t minor housekeeping issues. They’re the kind of structural problems that make every AI-powered forecast, every automated nurture sequence, and every pipeline health score basically useless.

Amy told a story on the webinar that’s going to sound familiar to a lot of operators. A portfolio company was testing AI tools across the revenue stack, moving fast, feeling sharp. She asked about their core KPIs and testing framework. The answer was gut feel. “We’re moving so fast,” they said. That’s not a technology problem. That’s a process problem wearing a technology costume.

According to IBM, poor data quality costs U.S. businesses $3.1 trillion annually. For a growth-stage SaaS company, it shows up differently: inflated CAC, missed expansion signals, AI tools that confidently surface the wrong answers because nobody cleaned the training data.

The ICP Alignment Issue

Even when CRM hygiene is solid, there’s another problem lurking. Three teams, three different definitions of the ideal customer.

Blake Tiemeyer, Director of Growth Acceleration at General Atlantic, sees it all the time. “I can’t tell you how many times we’ll talk to individuals where marketing has their own version of an ICP, sales has their own version of an ICP, and product has built something that no one even knew was rolling out.”

Think about what that means for an AI-powered scoring model. It’s doing exactly what you told it to do. The problem is that “you” is actually three different people with three different answers.

Leads get scored against the wrong criteria. Sequences get triggered for the wrong personas. Pipeline looks healthy until the deal desk gets involved and everyone realizes they’ve been talking about different customers all along.

Getting ICP alignment on paper before you turn any AI tool on isn’t a marketing exercise. It’s the only way any of this works.
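To make "on paper" concrete, here is a minimal sketch of what one shared ICP definition could look like once it is written down and every scoring path reads from it. Everything in it is an assumption for illustration: the `ICP` class, the field names, the example industries and titles, and the simple 0-to-3 score are hypothetical, not a description of any particular CRM or scoring tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ICP:
    """One agreed-upon ICP definition (all fields are hypothetical)."""
    industries: frozenset
    min_employees: int
    max_employees: int
    buyer_titles: frozenset


# A single shared definition that marketing, sales, and product all sign off on.
# The values below are placeholders, not recommendations.
SHARED_ICP = ICP(
    industries=frozenset({"saas", "fintech"}),
    min_employees=50,
    max_employees=1000,
    buyer_titles=frozenset({"vp sales", "cro", "head of revops"}),
)


def icp_score(lead: dict, icp: ICP = SHARED_ICP) -> int:
    """Count how many of the three ICP criteria a lead meets (0 to 3)."""
    score = 0
    if lead.get("industry", "").lower() in icp.industries:
        score += 1
    if icp.min_employees <= lead.get("employees", 0) <= icp.max_employees:
        score += 1
    if lead.get("title", "").lower() in icp.buyer_titles:
        score += 1
    return score


lead = {"industry": "SaaS", "employees": 200, "title": "CRO"}
print(icp_score(lead))
```

The scoring logic is deliberately trivial. The point is that there is exactly one `SHARED_ICP` object, so a disagreement about the ideal customer has to be settled in that definition rather than surfacing later as three conflicting scores.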

System of Record vs. System of Action

Not all tools carry the same risk, and treating them the same is where companies slow themselves down unnecessarily.

Amy draws a line between the two. Your system of record needs security review, data governance, real scrutiny before anything touches it. Your system of action, the tools teams are experimenting with day-to-day, can move faster once the guardrails are in place.

“We want to empower users, once it goes through that security review, to just test and play with them themselves versus necessarily having to go through RevOps to deploy it,” she said. “If it’s not going to touch our core infrastructure, let’s move.”

That framework matters because it gives teams actual permission to experiment without the whole organization becoming a bottleneck. RevOps doesn’t need to approve every trial. But they absolutely own the system of record decisions.

Blake’s take: go-to-market tech should live within RevOps, with a dotted line to the security team, especially at the $20M to $100M ARR stage where one wrong configuration change ripples across the entire stack.

You Can’t Fix What You Can’t See

Here’s the real business case for doing the foundation work before buying anything new.

You can’t identify leakage, justify an AI investment, or build any kind of improvement roadmap without seeing the full funnel.

Blake put it directly: “Now that we see the full funnel visibility, we see where the leakage is. Actually, now we can build the business case of what are we trying to solve. We’re trying to solve this leakage at this one exact point. How could AI potentially help us do that? But if you don’t have your arms around the full funnel, you’re not going to be able to have those really in-depth conversations.”

Most teams get this backwards. They buy the tool and then figure out what problem it’s solving. The right order is boring but it works: establish visibility, find the leak, form a hypothesis, pick the tool, define what success looks like, run the test. Inbound automation, AI SDRs, call intelligence, data enrichment. All of these can deliver. But not when they’re pointed at a funnel nobody fully understands yet.
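The "establish visibility, find the leak" steps can be sketched in a few lines. This is a rough illustration under stated assumptions: the stage names and counts are made up, and it treats the lowest stage-to-stage conversion rate as the leak, which is a simplification. In practice you would compare each rate against your own benchmarks before deciding where the problem is.

```python
def stage_conversions(funnel):
    """Return (from_stage, to_stage, rate) for each adjacent pair of stages."""
    rates = []
    for (name_a, count_a), (name_b, count_b) in zip(funnel, funnel[1:]):
        # Guard against an empty stage so we never divide by zero.
        rate = count_b / count_a if count_a else 0.0
        rates.append((name_a, name_b, rate))
    return rates


def biggest_leak(funnel):
    """Return the stage transition with the lowest conversion rate."""
    return min(stage_conversions(funnel), key=lambda r: r[2])


# Hypothetical funnel counts for one quarter, ordered top to bottom.
funnel = [
    ("lead", 1000),
    ("mql", 400),
    ("sql", 200),
    ("opportunity", 40),
    ("closed_won", 10),
]

for src, dst, rate in stage_conversions(funnel):
    print(f"{src} -> {dst}: {rate:.0%}")

src, dst, rate = biggest_leak(funnel)
print(f"Lowest conversion: {src} -> {dst} ({rate:.0%})")
```

With these example numbers the weakest transition is SQL to opportunity, which gives you a specific point to form a hypothesis around before any tool gets picked.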

Worth noting: GTM is carrying an enormous amount of weight right now.

In our survey, 74.6% of value creation teams spend the most time there, 67.2% rank net new pipeline as their top priority, and 44.8% say GTM has driven the most enterprise value over the past two years.

That pressure makes the temptation to reach for AI tools even stronger. It also makes a broken funnel even more costly.

Practical Steps Before You Buy the Next Tool

There’s no shortcut here, but the steps aren’t complicated.

- Define your core KPIs and actually enforce them. RevOps needs to own stage definitions, conversion benchmarks, and activity standards. If different teams are reporting on pipeline differently, you don’t have a shared view of the business and you definitely don’t have reliable AI inputs.

- Build gate processes and make them stick. A deal shouldn’t move from discovery to proposal without meeting defined criteria. Every bypassed gate is a corrupted data point, and corrupted data points compound fast.

- Get ICP alignment in writing before a single scoring model goes live. That means a real cross-functional working session with marketing, sales, and product. Document it. Put it in the CRM.

- Audit your existing tech stack before adding anything new. Amy caught a portfolio company that had bought data orchestration tools when what they actually needed was enrichment. Understand what you have first.

- Test with actual frameworks. Clear hypothesis, control group, defined success metric, real timeline. “We’re learning” is not a framework. Fast iteration requires structure to mean anything.
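As one illustration of the gate-process step above, a gate can be expressed as a list of fields that must be filled in before a deal is allowed to change stage. This is a sketch, not a real CRM integration: `GATE_CRITERIA`, the field names, and the `advance` helper are all hypothetical, and most CRMs would enforce the same idea through their own validation rules.

```python
# Hypothetical exit criteria, keyed by (current_stage, next_stage).
GATE_CRITERIA = {
    ("discovery", "proposal"): [
        "budget_confirmed",
        "decision_maker_identified",
        "pain_documented",
    ],
}


def missing_gate_fields(deal: dict, to_stage: str) -> list:
    """List the required fields the deal has not satisfied for this move."""
    required = GATE_CRITERIA.get((deal["stage"], to_stage), [])
    return [field for field in required if not deal.get(field)]


def advance(deal: dict, to_stage: str) -> dict:
    """Return a copy of the deal in the new stage, or raise if the gate fails."""
    missing = missing_gate_fields(deal, to_stage)
    if missing:
        raise ValueError(
            f"Cannot move to {to_stage}: missing {', '.join(missing)}"
        )
    return {**deal, "stage": to_stage}


deal = {"stage": "discovery", "budget_confirmed": True}
print(missing_gate_fields(deal, "proposal"))
```

Raising an error instead of logging a warning is a deliberate choice here: a gate that can be skipped silently produces exactly the corrupted data points the step is meant to prevent.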

The Bottom Line

The pattern we keep seeing is that execution challenges are showing up in uncomfortable places: CRM hygiene, ICP definitions, and funnel visibility.

None of that gets easier when you’re also trying to evaluate 20 AI tools at once.

AI amplifies what’s already there. Clean data, aligned teams, and visible funnels get faster and sharper. Messy data, siloed definitions, and invisible leakage get louder and more expensive.

The unsexy work of getting the foundation right isn’t a detour from the AI opportunity. It’s the path to it.

At York IE, we help growth-stage companies build this foundation across revenue operations, go-to-market strategy, and data infrastructure, so that when AI tools come into the picture, they’re multiplying real signal rather than magnifying noise.

To sum it up, Amy and Blake both call this the most important, and most overlooked, investment a company can make right now. If you want to hear the full conversation, watch the webinar here.